“Immersive” is the New Surround

Last month, we debated center vs. exploded loudspeaker clusters (FRONT of HOUSE, Sept. 2019, pages 38-39) and we also presented comments from some top sound consultants and some key manufacturers that develop/offer loudspeaker cluster/array solutions for engineered installations. The article elicited numerous responses from our readership. Consultant Richard A. Honeycutt, PhD, replied, “I mostly use center clusters with front-fills and stairstep or stage-lip speakers where possible to pull the image down, with delayed rear-fills as needed. I think line arrays are vastly overused; they work like magic in the right venues, but hit the sidewalls way too hard on a shoebox or similar room. In small rooms, I like Yamaha’s asymmetrical 2-way boxes. Danley makes a good asymmetrical system (SH50 and SH DFA) that does the trick in certain venues.”

Commenting on our “Part I” article on center clusters, published a month earlier (FOH, Aug. 2019, pages 28-29), Paul Luntsford replied, “As a theater consultant, I’m increasingly frustrated by the contemporary dominance of split LR designs without the C, which can confuse the localization of voice for miked principal actors in musical theater. All the world is not just concerts; we need both LR and LCR.”

Now, let’s look at how multi-channel and surround systems have evolved over the past century.

The Origin of LCR Speakers

Using three to five speakers is hardly a new trend. LCR sound was developed in 1939 for the 1940 cinema release of Disney’s Fantasia. Of course, most cinemas were lucky to have been fitted with mono sound systems just 10 to 12 years earlier. Developed by Walt Disney Productions and RCA, the “Fantasound” process employed three discrete, optically recorded sound tracks (Left, Center and Right) and a fourth control track used for synchronization. The format used a clever steering means to route audio to as many as 100 speakers all around the auditorium. The system’s complexity and the onset of World War II led to its demise. It wasn’t until the 1950s that a process for placing multichannel magnetic stripes on both 35mm and 70mm film was developed; the CinemaScope sound format placed four channels of magnetically recorded audio on film: Left, Center, Right and Surround.

Todd-AO introduced the 6-track format for 70mm films: Left, Left Extra, Center, Right Extra, Right and Surround (see Fig. 1). Several of the largest cinemas were set up with larger systems, including 70mm film projectors, five Altec A4 horn-loaded speakers behind the screen and small surround speakers.

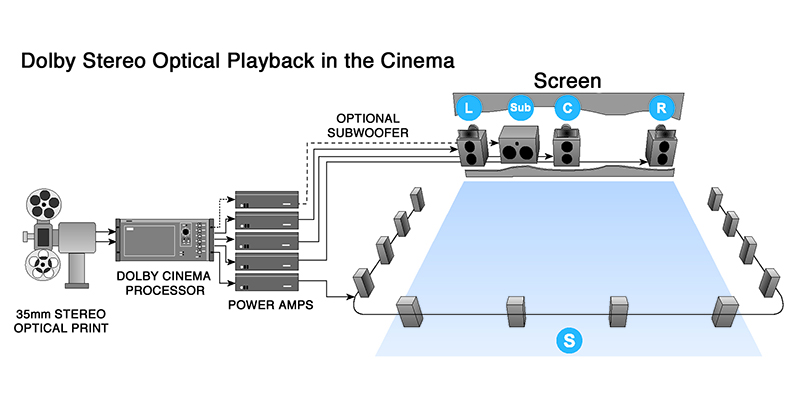

Due to the complexity and expense of creating and distributing film prints with fragile magnetic-striped soundtracks, the practice of multichannel cinema releases was limited until the 1976 release of A Star Is Born, which debuted Dolby Stereo film sound (Fig. 2). The format used phase matrixing to store LCRS onto a 2-channel format, which, in this case, put two closely spaced optical tracks on standard 35mm film. A 35mm Dolby Stereo film could be played anywhere, whether in a non-Dolby mono drive-in or in a theater upgraded with Dolby cinema decoders and a 4-channel playback system. With no appreciable cost increase in manufacturing optical stereo prints, film studios were receptive to the idea. In 1977, the success of Star Wars helped push exhibitors into upgrading to the new format, and multichannel cinema — in theaters and later, in homes — was here to stay.
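The idea of folding LCRS into two channels can be illustrated with a simplified matrix. The sketch below is an illustration under stated assumptions, not Dolby's implementation: it uses a plain polarity flip for the surround channel where the real system relies on phase shifting, it leaves out noise reduction and band-limiting, and its passive decoder has none of the steering logic found in a cinema processor. The function names are hypothetical.

```python
import math

G = math.sqrt(0.5)  # -3 dB coefficient applied to center and surround


def encode_lcrs(l, c, r, s):
    """Fold one sample each of L, C, R, S into a two-channel (Lt, Rt) pair.

    Assumption: surround is added in opposite polarity to Lt and Rt.
    A real encoder would use a phase shift rather than a polarity flip.
    """
    lt = l + G * c + G * s
    rt = r + G * c - G * s
    return lt, rt


def decode_passive(lt, rt):
    """Recover four outputs with a passive sum/difference decoder."""
    l_out = lt
    r_out = rt
    c_out = G * (lt + rt)  # in-phase content lands in the center
    s_out = G * (lt - rt)  # out-of-phase content lands in the surround
    return l_out, c_out, r_out, s_out


# A center-only source: full level in C, nothing in S, some leakage in L and R.
center_only = decode_passive(*encode_lcrs(0.0, 1.0, 0.0, 0.0))
# A surround-only source: full level in S, nothing in C.
surround_only = decode_passive(*encode_lcrs(0.0, 0.0, 0.0, 1.0))
```

In this sketch a center-only signal decodes at full level in C and not at all in S, but it also leaks into L and R at -3 dB. That limited separation is the price of a two-channel carrier that still plays acceptably on mono or stereo equipment.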

A further advancement came in the form of THX, developed by Tomlinson Holman at George Lucas’ company, Lucasfilm, in 1983 to ensure that the soundtrack for the third Star Wars film, Return of the Jedi, would be accurately reproduced in the best venues. THX does not specify a sound recording format; any format, whether digital (Dolby Digital) or analog (Dolby Stereo), can be “shown in THX.” It is a quality assurance method. THX-certified theaters provide a high-quality, predictable playback environment (including acoustics, calibration and crossover) to ensure that any film soundtrack mixed in THX will sound as near as possible to the intentions of the mixing engineer.

LCR Arrays/Clusters for Live Sound (3 Mains)

As we briefly discussed last month, LCR arrays (formerly clusters) are not new — they have been the default standard in cinemas, civic auditoria, live theater and high-end church sound systems for decades. In fact, the first LCR live-sound system that I designed and installed in a large church was about 1985. Almost all national touring musical theater shows carry LCR. This is ideal when panning wireless mics worn by actors as they move about a stage, as well as panning instruments or mic arrays between channels. With LCR systems, the total direct sound pressure level received (coverage) from each of the three LCR clusters should be as nearly uniform as practical across the audience.

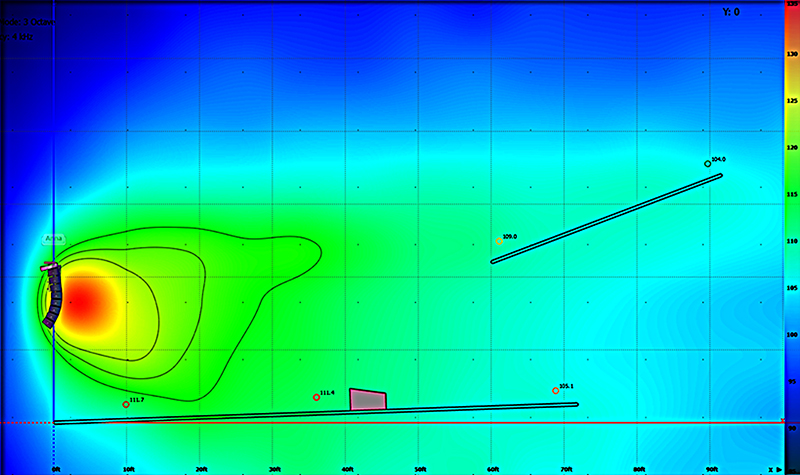

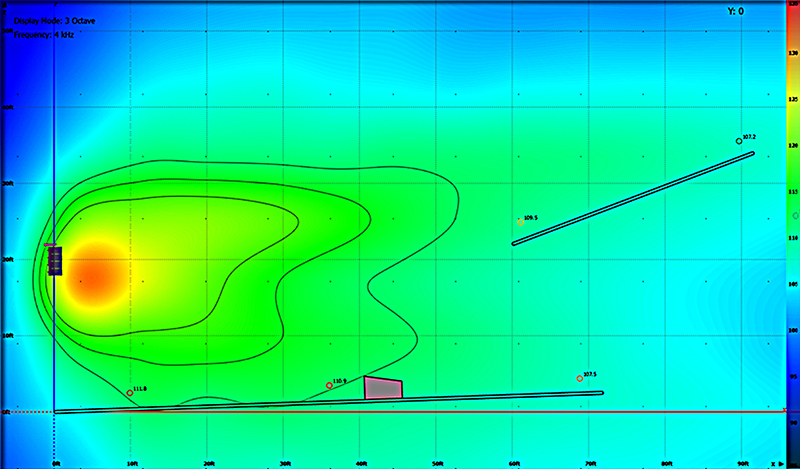

LCR array implementation is not too hard in traditional “shoebox”-shaped or square venues. However, very wide and/or large venues are more challenging to cover with uniform sound levels, and delay across very large venues can be a problem. In fact, all of my last several design projects included LCR arrays/clusters. The church sound project that I designed last year (see Fig. 3) had a tight budget, but just enough to provide not only Left/Right mains, to replace the failing L/R line-arrays, but also a center speaker cluster for uniform sound coverage and imaging to the central sound sources (speech and lead musicians). My custom, one-box end-fire main arrays, and the reason for their design, were explained in the August issue (and the directional end-fire subs were explained in the June issue, on pages 66-67). In the minds of most sound consultants (myself included), LCR systems have been the gold standard for modest-sized projects, up until now.

Venue sizes have grown, SPL targets have increased, and the large horns once commonly used in large venues have been discontinued (presumably passed over in the pursuit of better music sound quality). As a result, new technologies such as EAW’s ADAPTive beam-steered line-arrays have emerged to bring unique attributes to the table. Several of the live-sound engineers that I have talked to for this article series have been impressed with EAW’s new ADAPTive line-arrays, which can be effective in shorter lengths, enabling their use in central locations where conventional line-arrays would occlude sightlines. As a comparison, Fig. 4 shows a traditional 8-box J-shaped array. Fig. 5 shows a 4-box straight line-array using EAW’s ADAPTive ANNA. Fig. 6 shows an LCR system with three pairs of EAW arrays at Abundant Living Faith Center, El Paso, TX; design and installation were done by MGA, of Fresno, CA.

Jim Brown, a retired sound consultant specializing in LCR, explained, “A center channel has three primary purposes. The first, and most obvious, is to provide a hard center image for a ‘star’ or for dialog. Program material panned to the center feeds only the center cluster, as opposed to a left/right signal panned equally to left and right channels of a two-channel system. There’s no component of this signal in the side clusters to pull the image to the closest loudspeaker. The second primary purpose is to optimize intelligibility in acoustically difficult spaces by providing a dedicated channel for speech, and by reducing time smear as opposed to a two-channel system. This is important in relatively reverberant spaces. And if it is a factor, careful design of stereo systems can minimize any loss of intelligibility. The third primary purpose of a center channel is to reduce the time difference between adjacent panned signals. This is quite important in very wide rooms.”

The center-channel decision comes down to a few points, but Brown added, “From the point of view of perception, it’s always desirable to have a center channel in a stereo system, but how important it is depends on a number of complex factors, and not all venues can afford a 3-channel system. So, the questions are, when faced with a budget constraint, which gets eliminated from the project first: the left/right channels or the center channel, and when do we need three channels? The answers lie in the project budget, the uses of the audio system, room geometry, and architecture.”
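Brown’s third point, the arrival-time gap between adjacent channels in a wide room, can be put into rough numbers. The sketch below assumes a hypothetical 24 m wide stage with clusters at the left edge, center and right edge, a listener seated well off-center, and a speed of sound of about 343 m/s. All positions and names are illustrative, not taken from any project in this article.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # approximate value for air near 20 °C


def arrival_gap_ms(listener, speaker_a, speaker_b):
    """Difference in arrival time (ms) between two speakers at one seat."""
    dist_a = math.dist(listener, speaker_a)
    dist_b = math.dist(listener, speaker_b)
    return abs(dist_a - dist_b) / SPEED_OF_SOUND_M_S * 1000.0


# Hypothetical plan-view coordinates in meters (x across the room, y into it).
left, center, right = (-12.0, 0.0), (0.0, 0.0), (12.0, 0.0)
seat = (8.0, 15.0)  # an off-center seat, closer to the right cluster

gap_left_right = arrival_gap_ms(seat, left, right)      # two-channel L/R pair
gap_center_right = arrival_gap_ms(seat, center, right)  # nearest adjacent LCR pair

print(f"L vs R: {gap_left_right:.1f} ms, C vs R: {gap_center_right:.1f} ms")
```

For this seat, a signal panned between center and right arrives with a far smaller time offset than one shared between left and right, which is the effect Brown describes. The wider the room, the larger the L/R gap becomes for off-center seats.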

Quad for Live-Sound (Four Mains)

In the consumer world, quadraphonic audio was a commercial failure due to many technical problems and format incompatibilities; formats were more expensive to produce than standard two-channel stereo. Playback required additional speakers and specially designed decoders and amplifiers. In 1967, Pink Floyd performed the first-ever quad/surround sound concert at “Games for May,” a lavish affair at London’s Queen Elizabeth Hall where the band debuted its custom-made quadraphonic sound system. The technological breakthrough not only amazed but also confused the mass of stoned concertgoers.

Recently, Suzanne Ciani did a few concerts with a 4-mains quad system, but quad has not been widely used.

Ambisonics

Ambisonics was developed in the U.K. in the 1970s under the auspices of the British National Research Development Corporation. Despite its solid technical foundation and advantages, until recently, Ambisonics had not been an outstanding commercial success, surviving only in niche applications and among audiophiles. However, since its adoption as the audio format of choice for virtual reality by Google, THX and other manufacturers, Ambisonics has seen a surge of interest. It is a full-sphere surround sound format; in addition to the horizontal plane, it covers sound sources above and below the listener. Unlike other multichannel surround formats, its transmission channels do not carry speaker signals. Instead, they contain a speaker-independent representation of a sound field called B-format, which is then decoded to the listener’s speaker setup. This extra step allows the producer to think in terms of source directions rather than loudspeaker positions, and offers the users a considerable degree of flexibility as to the layout and number of speakers used for playback.
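The separation between encoding a source direction and decoding to a particular speaker layout can be sketched in a few lines. The example below assumes first-order B-format with the traditional weighting (W scaled by 1/√2) and a very basic projection decoder. Real decoders are tuned to the layout and to psychoacoustic criteria, so treat the decoder here as one simple option among many. The function names are hypothetical.

```python
import math


def encode_b_format(sample, azimuth_deg, elevation_deg=0.0):
    """Encode a mono sample at a given direction into (W, X, Y, Z)."""
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    w = sample / math.sqrt(2.0)                # omnidirectional component
    x = sample * math.cos(az) * math.cos(el)   # front/back
    y = sample * math.sin(az) * math.cos(el)   # left/right
    z = sample * math.sin(el)                  # up/down
    return w, x, y, z


def decode_to_speakers(b_format, speaker_dirs_deg):
    """Basic projection decode to speakers given as (azimuth, elevation)."""
    w, x, y, z = b_format
    count = len(speaker_dirs_deg)
    feeds = []
    for az_deg, el_deg in speaker_dirs_deg:
        az = math.radians(az_deg)
        el = math.radians(el_deg)
        feed = (math.sqrt(2.0) * w
                + x * math.cos(az) * math.cos(el)
                + y * math.sin(az) * math.cos(el)
                + z * math.sin(el)) / count
        feeds.append(feed)
    return feeds


# One encoded source, two different playback layouts (assumed for illustration).
source = encode_b_format(1.0, azimuth_deg=45.0)
square = [(45, 0), (135, 0), (225, 0), (315, 0)]
hexagon = [(0, 0), (60, 0), (120, 0), (180, 0), (240, 0), (300, 0)]
square_feeds = decode_to_speakers(source, square)
hexagon_feeds = decode_to_speakers(source, hexagon)
```

The same four B-format values feed both layouts; only the decode step knows where the speakers are. In the square, the speaker at 45° receives the strongest feed and the diagonally opposite speaker receives essentially none.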

In the past few years, the ISO/IEC Moving Picture Experts Group (MPEG) has specified a new surround sound standard, MPEG-H 3D Audio (ISO/IEC 23008-3, MPEG-H Part 3), which supports coding audio as audio channels, audio objects, or Higher Order Ambisonics (HOA). MPEG-H 3D Audio supports up to 64 loudspeaker channels and 128 codec core channels. Time will tell if a variation of Ambisonics is developed for large concert venue/auditoria applications.

Cinema and 5.1+ Home Theater Surround

Starting almost a century ago with The Jazz Singer, the first feature-length “talkie” in 1927, cinema sound systems were just mono; later came three “screen channels” of sound, from loudspeakers located in front of the audience, at the Left, Center and Right. Surround sound adds one or more channels from loudspeakers behind the listener, providing the ability to create the sensation of sound coming from any horizontal direction 360° around the listener. Surround sound formats vary in reproduction and recording methods along with the number and positioning of additional channels. The most common surround sound specification for home theater, the 5.1 standard, has five speakers plus a subwoofer. Surround sound typically has a small listener area where the audio surround effects work best and presents a fixed or forward perspective of the sound field to the listener at this location, so 5.1 surround sound has not been conducive to live events like a large concert audience or church application. Even so, in 2012, Michael Miles published a 5.1 plus delayed/zoned surround sound scheme for large concert venues.

7-22 Channel Theaters and Home Cinema

The 7.1 surround speaker configuration adds two additional rear speakers to the conventional 5.1 format for a total of four surround channels and three front channels, thus creating a fuller 360° sound field; it is commonly found in cinemas and on Blu-ray discs in Dolby and DTS formats. 7.1.4 surround sound, along with 5.1.4 surround sound, adds four overhead speakers to enable sound objects and special effect sounds to be panned overhead for the listener. This approach was introduced for theatrical film releases in 2012 by Dolby Laboratories under the trademark name Dolby Atmos. Sony Dynamic Digital Sound (SDDS) is an 8-channel cinema configuration with five independent audio channels across the front, two independent surround channels, and a low-frequency effects channel. 10.2 is the surround sound format developed by THX creator Tomlinson Holman of TMH Labs and the University of Southern California. The name 10.2 refers to the format’s promotional slogan: “Twice as good as 5.1.” Advocates of 10.2 argue that it is the audio equivalent of IMAX. 11.1 sound is supported by Barco with installations in theaters worldwide. 22.2 is the surround sound component of Super Hi-Vision (a new television standard with 16 times the pixel resolution of HDTV). Developed by Japan’s NHK Science & Technical Research Laboratories, it uses 24 speakers (including two subwoofers) arranged in three layers.

Dolby Atmos Music (Immersive)

Since the ’70s, Dolby has been developing surround sound and compression schemes for the cinema, home theater and other consumer audio markets. Dolby expressed “interest” in Ambisonics by acquiring (and liquidating) Barcelona-based Ambisonics specialist imm sound prior to launching Dolby Atmos, which does implement decoupling between source direction and actual loudspeaker positions, with support for up to 128 channels and up to 34 separate speakers in a home theater. Atmos takes a totally different approach in that it does not attempt to transmit a sound field; it transmits discrete premixes or stems along with metadata about what location and direction they should appear to be coming from. The stems are then decoded, mixed, and rendered in real time using speakers available at the playback location. So now, Dolby is providing recording artists with a new way to express themselves in immersive audio with Dolby Atmos Music. The process lets users place and move sounds in three-dimensional space as audio objects, with much more precision than traditional surround. With the addition of overhead speakers, you can create an encompassing soundstage to make listeners feel like they’re inside the experience. Premium dance clubs around the world are starting to introduce immersive audio into their venues with Dolby Atmos Music.
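The general idea of rendering an object from its position metadata onto whatever speakers are present can be sketched with simple pairwise panning. This is a generic illustration only: it is not Dolby’s renderer, it works in the horizontal plane only, and the function name, layouts and constant-power pan law are assumptions chosen for brevity.

```python
import math


def render_object(azimuth_deg, speaker_az_deg):
    """Return one gain per speaker for an object at the given azimuth.

    The object is panned with a constant-power law between the two
    speakers that bracket it. Speakers must have distinct azimuths.
    """
    if len(speaker_az_deg) < 2:
        raise ValueError("need at least two speakers")
    az = azimuth_deg % 360.0
    # Visit speakers in order of azimuth so neighbors form adjacent pairs.
    order = sorted(range(len(speaker_az_deg)),
                   key=lambda i: speaker_az_deg[i] % 360.0)
    gains = [0.0] * len(speaker_az_deg)
    for k, i in enumerate(order):
        j = order[(k + 1) % len(order)]  # next speaker, wrapping around
        span = (speaker_az_deg[j] - speaker_az_deg[i]) % 360.0
        offset = (az - speaker_az_deg[i]) % 360.0
        if span > 0.0 and offset <= span:
            frac = offset / span
            gains[i] = math.cos(frac * math.pi / 2.0)
            gains[j] = math.sin(frac * math.pi / 2.0)
            break
    return gains


# The same object metadata rendered to two hypothetical speaker rings.
five_ring = [0.0, 30.0, 110.0, 250.0, 330.0]
quad_ring = [45.0, 135.0, 225.0, 315.0]
gains_five = render_object(15.0, five_ring)
gains_quad = render_object(15.0, quad_ring)
```

The object’s metadata (here, just an azimuth of 15°) never changes; only the gains do. On the five-speaker ring the object is shared equally between the speakers at 0° and 30°, while on the quad ring it is split between the speakers at 315° and 45°, with total power held constant in both cases.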

Octophonic for Live Sound (8 Arrays/Speakers)

The first known Octophonic electronic concert — that is, eight speakers surrounding the audience in a 360° circular arrangement, as opposed to the cubical arrangement — was John Cage’s Williams Mix (1951-53) on eight separate magnetic tapes. For over a decade, several small groups of artists and engineers around the world have been composing and performing electronic music with modern Octophonic sound systems for small groups. I don’t yet know how scalable it is for larger concert venues or auditoria.

The Wall of Sound (11 Channel Mains)

Designed by Owsley “Bear” Stanley specifically for the Grateful Dead’s live performances in 1974, the Wall of Sound was the first large-scale line array used in modern sound reinforcement systems, although it was not called that at the time. It combined six independent sound systems using 11 separate channels of speakers, so as to deliver higher quality sound to fans. As each speaker carried just one sound, it was reportedly exceptionally clear, with less intermodulation distortion, over a very large area. When the Dead began touring again in 1976, it was replaced with a more logistically practical sound system.

Electronic Acoustics (Large Number of Small Surround Speakers)

An Electronic Acoustic System (EAS), or acoustic enhancement system, is a subtle type of sound reinforcement system that uses room mics to augment direct and reverberant sound. Such systems have been used for a few decades, in hundreds of auditoria and churches, to provide sound in a way that is natural (enveloping the audience) for acoustic performances, and to increase the reverberation on demand (to optimize the decay time for various types of program material). EAS have been championed by LARES/E-Coustic, Meyer Constellation (formerly VRAS), SIAP, Yamaha and others.

I experimented with a crude EAS in the 1980s and first learned about viable EAS in the early ’90s, just before a several-year mega-church design project that I had with Southeast Christian Church (Louisville, KY). My plan was to use the Lexicon-based LARES processor, but we ultimately went with a SIAP DSP and design, due largely to its higher channel count at the time, which was important in a 9,000-seat venue. L-Acoustics, d&b audiotechnik and others are looking at including EAS functions as well.

Theatrical Surround Effects Systems (LCR Mains + Various Surround Speakers)

I discussed the live theater market niche with some key vendors and Arthur Skudra, an independent sound consultant, and I asked him to articulate what key players laid the groundwork for automation of surround sound/control in live theater: “We draw parallels between the live production experience and what a person enjoys in a modern movie theater. As higher resolution and increased output channel surround audio becomes commonplace in cinema, so too live audio advances to satisfy heightened audience expectations. Theatrical arts have done surround/immersive sound design for many years, acoustic transparency is king; this niche of audio is nothing new. It rests on the shoulders of those who have gone before. LCS Audio (now Meyer) developed SpaceMap software, Richmond Sound Design developed SoundMan Designer, and Out Board developed TiMax, each a unique approach to manipulating level, equalization, and time delay of discrete sources of audio in an audio matrix, to a set of outputs tied to loudspeakers around/throughout a venue.”

Operators create a set of cues to automate the playback, with movement and localization of these audio sources during a show. When you’re dealing with hundreds of performances of the same show, the time and effort to set up and program such a system to enhance the performance with immersive audio is very worthwhile. Over time, improved software tools made the creation of these cues more efficient, allowing the ability to map a venue on a computer screen (now in 3D) and with the click of a mouse (or swipe on an iPad), move the audio anywhere in the space using an object-oriented user interface. With more powerful hardware and software, we are now able to manipulate “live” sources dynamically with wireless tracking devices. It is very exciting to see more of these solutions coming to the concert sound industry; improvements in hardware and software are making immersive audio more attainable.

Recently, L-Acoustics’ L-ISA and d&b’s En-Space have also provided immersive/surround sound image effects for live theatre; combining such immersive DSP-based systems with other software-based show control platforms, such as Figure 53’s QLab, can complete the sound solution in live theatre and multi-purpose venues. (We’ll get into this more deeply in next month’s issue of FOH.)

Immersive Live-Sound (Object-Based 5 – 7 Mains + Several Surrounds)

My first exposure to Soundscape by d&b audiotechnik and L-Acoustics’ L-ISA systems was at their impressive demos at the Jan. 2019 NAMM convention. Immersive Sound — in its current form — is fairly new and has been used for a small number of tours and venue installations, but several manufacturers are now working to compete in this new market niche, to offer the newest version of surround systems for live sound.

Several festivals and tours have used this new 3D immersive audio technology to great effect. New L-ISA installations of immersive live-sound systems include Park MGM’s 5,200-seat Park Theater in Las Vegas, currently shared by Aerosmith, Bruno Mars, Lady Gaga and Cher, along with two Princess Cruises and the Moscow Musical Theatre. New d&b Soundscape system installations include The Shed in New York City, London’s Phoenix Theatre, the Studio City arena in Macau, China and First Assembly, Calgary.

L-Acoustics’ L-ISA (Immersive Sound Art), whose live-sound iteration is known as L-ISA Live, is considered the market leader at the moment (see Fig. 7). d&b audiotechnik has Soundscape, which is based on its Dante-enabled DS100 Signal Engine and two software modules: d&b En-Scene (for sound object positioning) and d&b En-Space (a room emulator that will add to a space’s reverberation). Alcons Audio, EAW and Martin Audio are promoting their 3D immersive audio technology in partnership with Dutch-based Astro Spatial Audio (an immersive software developer); other developers include IRCAM, Envelop, Flux and Ina GRM. Meyer Sound Labs is gearing up a live version of its object-based mapping system, first developed for its earlier Constellation active acoustics and Spacemap spatial DSP-based sound systems, whereas many others are running immersive software on a high-end Mac or PC (relying on mixer I/O). The use of tracking beacons assigned to a source or listener makes it easy to automatically control the position of an audio object based on the location of a person or an object.

Conclusion

In researching surround and modern 3D immersive live-sound systems, it’s clear that object-based immersive software offers designers and users more options to scale the numbers of arrays/loudspeakers, based on the budget, target SPL, the size of the venue, the amount of spatial resolution desired and envelopment. Some systems use dedicated DSP, while others have immersive software program(s) running on a high-power computer — some with mixer integration. Immersive systems require considerably more speaker locations than before, yet the arrays need not be as large as a system with fewer arrays; and some allow for phased system enlargement over time.

Aside from the higher investment in equipment and personnel, my concerns with immersive systems are how the additional arrays/loudspeaker locations affect speech intelligibility and timing for music.

We will have to wait and see which of these immersive systems becomes a truly dominant industry standard.

Forum: LCR Clusters vs. L/R Line Arrays

To conclude our look at LCR, multi-channel arrays and surround, I discussed sound-imaging design issues, along with an introduction to immersive systems, with some leading live-sound engineers.

Robert Scovill, Avid: “Anybody who has been following what I’ve been doing in my P.A. system designs for the past 25 years or so would understand my fondness for LCR. That said, it’s a very tricky thing to do and do well in a large concert setting for music. My approach to the C portion of LCR in large-scale sound reinforcement was to use it to create an “actual” center image as opposed to a center image from summing two split arrays. But given the inability to create an “actual” center image that would cover an entire building geometry at that scale, my goal was to make this image apparent and active for a small, but very meaningful part of the audience where localization was important; the floor seats in the center of the room. By doing it this way, it was clear we were going to need to use a 50% divergent approach, meaning anything panned “center” on the console would be distributed with equal energy to all three arrays.”

Marc Lopez, d&b audiotechnik: “As we move into more immersive systems such as the d&b Soundscape, venues are working with multiple front loudspeaker clusters/arrays (at least LCR, but typically 5 points or more depending on the venue) in order to convey their content in a more engaging manner to the entire audience than a traditional sound system is able to do.”

David McCauley, of the CSD Group: “The new market is locational audio that is more than just the LCR. What we call “Real Audio” where the timing and localizations are put on an exploded cluster style design sometimes with 360 speakers and 64-channels of discrete channels (this can be done with arrays as well) so that you have more localized sound more like what you experience in a theater production in Broadway or Vegas. Design and Deployment is the key factor, as this style of system can be complicated but worthwhile when done correctly!”

Bob McCarthy of Meyer Sound: “So what about L/R for music and center for voice? The first thing to acknowledge is that such a scheme means you are mixing in the space. The relationship of the center cluster is unique to every location in the room. This is a non-starter for a visiting mixer, so what do they do? Bus the L and R feeds into the center and lose intelligibility as a result of adding the intelligibility-superior center. For musical theater, this has applicability because these productions have extensive tech time to matrix out the coverage precisely for the space. The same can be said for HOW applications, as there are minimal variations in stage configuration or band type. LCR is extremely sensitive to spatial variation, so it requires a precise fit into the room and adherence to a strict mixing protocol. Musical theater and HOW are best suited to this paradigm. A roadhouse with various acts would be on the opposite end. Large touring acts can go this route if they are carrying their own P.A. and playing nearly identical room shapes. If the shapes change, you get serious challenges to maintaining the spatial mix.”

Jerrold Stevens, Marsh/PMK: “The ADAPTive Array technology from EAW is a major development in loudspeaker technology. I’m doing more true LCR systems because of the control these systems offer. I think the trend is moving more toward ‘immersive’ multichannel systems, which go well beyond just stereo.”

Scott Sugden, L-Acoustics: “It is rare to see a theater show in NYC or London without a center cluster. In small theater spaces, the center cluster is usually capable of achieving coverage for most of the venue and ensures good SPL propagation for any mono signal. Challenges arise with LCR style systems, much like L/R systems, for everything that is not strictly mono. If the designer or mixer uses the L/R system for the band and mixes the drums in the center, then the usual imaging and frequency stability (comb filter) issues will be present. While the center cluster is an improvement for speaking and solo vocals, it doesn’t improve stereo music reproduction. Depending on the production, a center cluster can be an improvement, but often not the full solution. To enhance audio delivery more completely, solutions such as L-ISA technology provide signal stability (to improve intelligibility), proper localization no matter where the artist is positioned onstage and address the timing issues presented in many traditional designs.”

Steve Ellison, Meyer Sound: “Classic LCR systems have the advantage of providing even coverage and, in the right venue and application, the ability to preserve clarity of dialog and to create a sense of space. Immersive systems need to provide a wow factor to support the additional requirements of equipment needs. We’ve been providing systems that have a huge wow factor in Las Vegas showrooms, Broadway productions and more for decades with our Spacemap technology but those are fixed installations.”

Bjorn Van Munster, Astro Spatial Audio: “When concerts and operas were first performed, there was no reinforcement at all, but audiences have come to accept a stereo or LCR system. Why accept this? It’s not what was intended, and it destroys what the classical composer had in mind. The ability of an immersive system to “dissolve” into the auditorium is symbiotic with its ability to lift a production to a new level. You really miss it when it isn’t there. We do a demo with an Astro remix of Michael Jackson’s “Billie Jean,” and now when I hear the original stereo version, it sounds empty. I believe it’s not a case of if immersive audio will happen, it’s a case of when.”

With so many responses about immersive sound developments, we could not include them all in this issue, so check back next month and we’ll dig deeper into the exciting new world of immersive live sound systems. -ed.

David K. Kennedy operates David Kennedy Associates, consulting on the design of architectural acoustics and live-sound systems, along with contract applications engineering and market research for speaker manufacturers. He has designed hundreds of auditorium sound systems for churches, schools, performing arts centers and AV contractors. Reach him at [email protected].